Software Wasn't Built for AI Agents, but They're Becoming the Users Anyway

Published on April 29, 2026

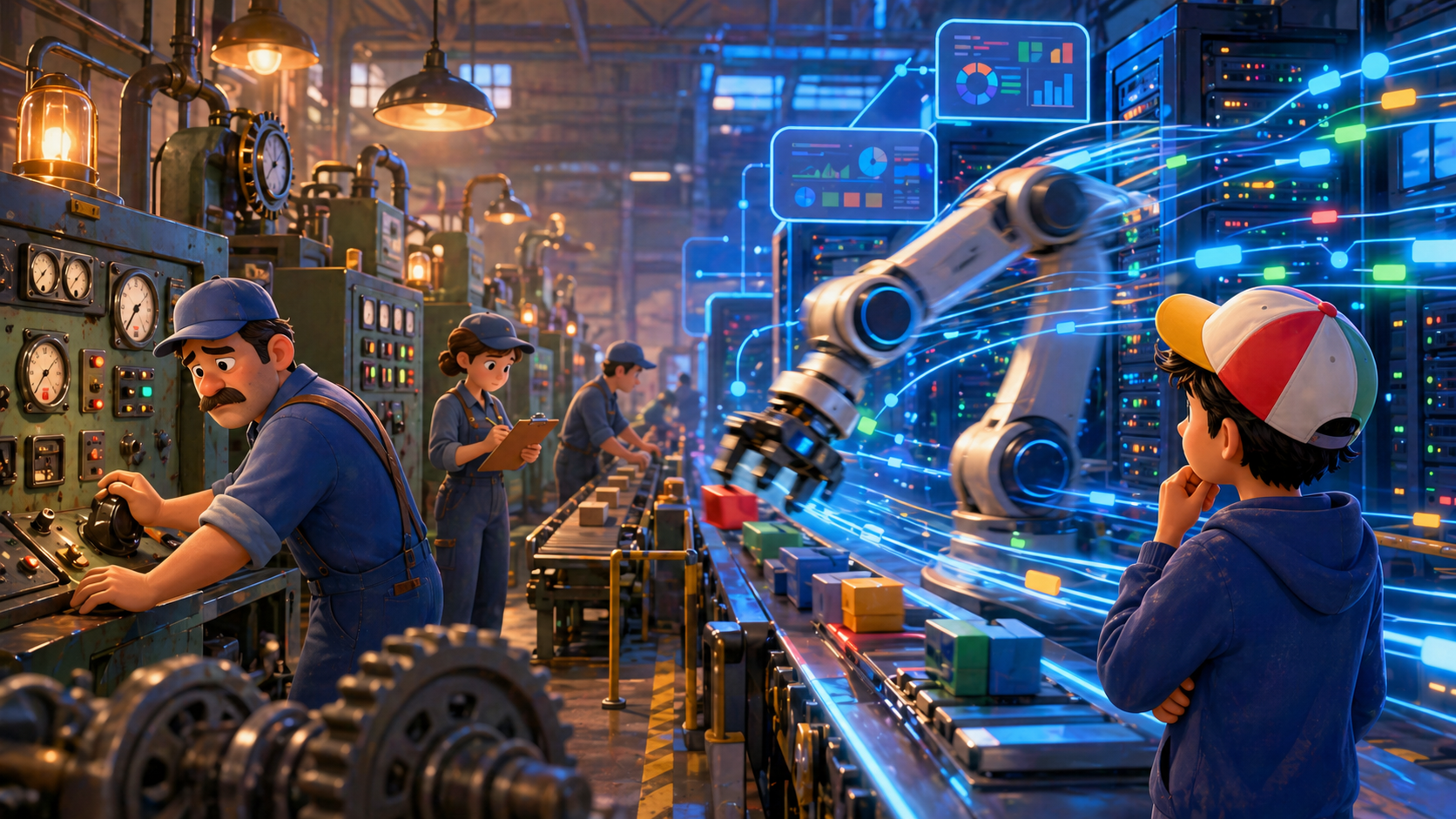

For most of my time around software, the user was either a human or another application built for humans. Lately I've been noticing a third user quietly taking over the room — AI agents.

I just spent a few hours with talks from Google Cloud Next '26. One from Ali Furman at PwC on how Gen Z and Gen Alpha are reshaping commerce. Another from Yasmeen Ahmad at Google and Farhan Thawar from Shopify on how the entire data and software stack is being inverted around agents. On the surface they're about different things. But the more I sat with them, the more they felt like two halves of the same story.

On one side, AI is becoming the front door to commerce. On the other, the whole architecture of how software gets built and consumed is being rewritten around something that isn't human.

So in this post, I wanted to pull those threads together. Not as a technical deep dive — more as a way to think about what changes when the user we've been designing for, the human clicking buttons, isn't the one driving the system anymore.

The shift on the demand side is creating real pressure on the supply side. If shoppers find your products through an AI agent, the agent — not the human — is your customer. And agents don't behave like humans.

The cart is now the family group chat

Ali Furman opened with two examples. An eleven-year-old adding lip gloss to her mom's cart online. A thirteen-year-old ordering dinner from a delivery app. In a lot of cases, the parents don't even realize what's happening.

PwC's research backs that up. Nearly one in four Gen Alpha kids — fourteen and under — are already ordering through shopping apps independently. Thirty-eight percent of young teens use AI tools daily, mostly for fun.

I liked Furman's framing for this:

The cart is now the family group chat.

A digitally fluent kid is shaping household demand long before they have a credit card or a job. The brand designing for "the parent" is missing the actual decision-maker. That reframed how I think about who a "buyer" really is in a home.

The feed is now the shelf

The second shift is even bigger. AI is becoming the place people go to discover products in the first place.

One stat genuinely surprised me: generative AI referral traffic to retailers grew over 700% year over year. Sixty percent of consumers already use AI platforms to search and discover brands and products. We're entering what Furman called agentic commerce, where AI doesn't just help you shop, it shops for you.

The old flow: you search, you scroll, you click, you decide, across many UIs. In the agentic version, the AI runs those steps on your behalf.

If you're a brand, the implication is uncomfortable. The buyer might never visit your site, see your homepage, or scroll your beautifully designed feed. The AI sees a structured representation of your products and decides whether to surface you. So the new SEO is something like machine-readability — and the new shelf isn't a page, it's a model's reasoning step.

That alone would be enough to think about. But it's also the front edge of a much bigger change happening underneath.
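To make "machine-readability" a bit more concrete, here is a minimal sketch of a product expressed as schema.org `Product` JSON-LD. The talk didn't name a format, so schema.org is my assumption, and the product, SKU, and price are invented for the example.

```python
import json

def product_to_jsonld(name: str, sku: str, price: float, currency: str, in_stock: bool) -> str:
    """Serialize one product as schema.org Product JSON-LD (illustrative fields only)."""
    availability = "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    doc = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": availability,
        },
    }
    return json.dumps(doc, indent=2)

# Hypothetical product, made up for this example.
listing = product_to_jsonld("Cherry Lip Gloss", "LG-001", 7.5, "USD", True)
print(listing)
```

The point isn't this particular vocabulary. It's that price, availability, and identity are explicit fields an agent can read without rendering a page.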

Architecture wasn't built for this

This is where Yasmeen Ahmad's talk really clicked for me.

Her observation: for the last decade or two, we've built data and software for two consumers. The human, and the application built for humans. Both are slow and deterministic. A human takes seconds to click, minutes to read a chart, days to decide on something. So we built dashboards, UIs, buttons — basically slowing technology down to meet human speed. A 45-second SQL query was fine; the human went and got coffee.

Applications, in turn, were built on rigid APIs. Once those APIs existed, they became frozen. Anyone who's worked on a long-lived platform knows this — change an API and everything downstream breaks.

Now look at the same picture if the user is an agent.

Yasmeen's line that stayed with me:

Agents don't query, they reason.

An agent doesn't click once. It loops. It tries, verifies, disambiguates, retries. Where a human took one click, an agent might hit an API ten or twenty times. Recent web traffic stats from API gateways already show this: massive, violent spikes that come not from humans getting faster but from agents coming online. Ten to twenty times more API calls per "click," multiplied by multi-step reasoning, ends up being roughly a hundred times more compute per request.

That's the part where you realize the old architecture isn't going to bend gently — it's going to break.
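A back-of-envelope version of that multiplication, as a sketch. The ten-to-twenty calls per click come from the talk. The number of reasoning steps per call is my assumption, picked only to show how the product can land near a hundred.

```python
def amplification(calls_per_click: int, reasoning_steps: int) -> int:
    """Compute multiplier relative to one human click that costs one unit."""
    return calls_per_click * reasoning_steps

# 10 and 20 calls per click are from the talk; 10 and 5 reasoning steps
# are assumed values, not figures from the talk.
low = amplification(calls_per_click=10, reasoning_steps=10)
high = amplification(calls_per_click=20, reasoning_steps=5)
print(low, high)  # prints "100 100"
```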

Why the old stack breaks

The more I sat with this, the more I saw the failure modes stack up. They aren't one issue. They're a category.

The interesting part isn't that any one layer is "the problem." Every layer was tuned for human-speed deterministic users. When you change the user, every layer is suddenly out of spec.

Yasmeen framed Google's response as a three-layer inversion: the reasoning engine, orchestration, and the trust fabric. Each one is a rethink of what that layer of the stack should actually do.

The reasoning engine

This is where the interface, data, and compute have to start working together as one thing.

The interface part was the line I most enjoyed:

CLIs are sexy again.

For years the industry was moving up the stack — prettier UIs, smoother flows, less friction for humans. Agents flipped that. Agents speak code. Agents prefer terminals, scripts, and direct API access. When an agent hits a broken API, it can write a patch and execute a workaround. When it needs a tool that doesn't exist, it can drop into a sandbox, write Python, test it, and delete the code afterward. That's a very different "user" than the one we've been designing for.

The data part is even more interesting. The bet for the last decade was on the SQL engine — squeezing out the most performance and efficiency from relational queries. Agents don't operate cleanly on SQL. They operate on intent. Intent needs more than rows and columns. It needs vector embeddings for similarity, graph for relationships, relational for structured records, and unstructured handling for text and media — all in one place, with zero hops.

A make-it-real example from the talk: MakeMyTrip, India's biggest online travel agency, was trying to build a voice-activated, multimodal itinerary builder. They had MongoDB for unstructured data, Qdrant for vectors, Elasticsearch for search, and a relational system for the rest. You can't build a fluid interactive experience on a fragmented stack. They moved to Spanner with vector, graph, AI inferencing, and traditional SQL all in one engine, and cut operational complexity by 75%.
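To get a feel for what "all in one place, with zero hops" buys you, here's a toy sketch where one function applies a relational filter, a graph lookup, and a vector ranking in a single pass. It's plain in-memory Python, not Spanner's API and not the travel company's actual system. The hotels, edges, and vectors are all invented.

```python
import math

# Toy in-memory data, invented for illustration.
HOTELS = [
    {"id": "h1", "city": "Goa", "price": 90, "vec": [0.9, 0.1]},
    {"id": "h2", "city": "Goa", "price": 200, "vec": [0.8, 0.2]},
    {"id": "h3", "city": "Delhi", "price": 80, "vec": [0.1, 0.9]},
]
# Graph edges: which places each hotel is near.
NEAR = {"h1": ["beach"], "h2": ["beach", "airport"], "h3": ["museum"]}

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

def search(city, max_price, near, query_vec):
    """Relational filter, then graph filter, then vector ranking, in one call."""
    rows = [h for h in HOTELS if h["city"] == city and h["price"] <= max_price]
    rows = [h for h in rows if near in NEAR[h["id"]]]
    return sorted(rows, key=lambda h: cosine(h["vec"], query_vec), reverse=True)

results = search("Goa", 250, "beach", [1.0, 0.0])
print([h["id"] for h in results])  # prints "['h1', 'h2']"
```

On a fragmented stack, each of those three steps would be a separate system and a separate network hop, which is where an agent's loop starts to stall.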

The compute layer rounds it out. Agents think in invisible tokens. One command can spawn ten thousand thinking tokens as the agent walks a decision tree. Chips designed for human-paced work become bottlenecks for multi-step reasoning loops. That's the case for separating inference from training and compressing memory — to keep the reasoning loop from hitting a traffic jam.

The shift in mindset, in one line: from a stack built for storage efficiency to a stack built for reasoning efficiency.

Orchestration: from instructions to intent

If the reasoning engine is the engine, orchestration is what you do with the speed.

The last decade ran on imperative code — task by task, click by click. The new pattern is intent-driven engineering. You define the outcome and let an AI figure out the path. And not just one AI — a swarm of specialized agents activated to complete that outcome together.

The example that made this concrete: imagine the Strait of Hormuz closes, with a parallel blockade in the Red Sea. Global shipping has to suspend transit and reroute via the Cape of Good Hope, adding fourteen days. In the old world, a 72-hour human sprint kicks off — war room, analysts pulling CSVs, a leader walking into a room three days later with a slide deck. By then the cargo space is gone and millions are already lost.

In the new world, the intent is just: preserve margins on impacted cargo.

A 72-hour human sprint, compressed to under three seconds. That's the part where the abstract idea of "agentic" stopped feeling like a buzzword to me. It's a structural change in how organizations can react.

But Yasmeen made an important point. The model alone doesn't get you there. The model is the engine. To turn the model into real outcomes, you need an agentic harness. She compared it to the early internet: the network was technically there, but it was unusable until the web browser made it tangible. The agentic harness plays the same role for these models.

The harness is what lets a swarm actually orchestrate against a real-world goal, instead of just generating text about one.

A second example made this even clearer. Infinite, a decentralized finance platform, runs an agentic swarm whenever a human asks for a financial strategy. A discovery agent maps global liquidity risks. A risk agent audits smart contracts in milliseconds. An execution agent drafts the routing path to minimize price slippage. A verification agent watches the whole chain and is ready to kill the trade if the math changes. Twenty minutes of human work in multiple systems, compressed to a single instant action.
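The four-agent flow above maps onto a small pipeline with a kill switch at the end. This is only a sketch of the pattern as I understood it: the agent functions are stubs, and the pool names, slippage numbers, and tolerance are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Plan:
    pools: list                     # filled in by the discovery agent
    audited: bool = False           # set by the risk agent
    route: str = ""                 # drafted by the execution agent
    expected_slippage: float = 0.0

def discovery_agent(request: str) -> Plan:
    # Stand-in for mapping liquidity; returns fixed, made-up pools.
    return Plan(pools=["pool-a", "pool-b"])

def risk_agent(plan: Plan) -> Plan:
    plan.audited = True  # stand-in for a smart-contract audit
    return plan

def execution_agent(plan: Plan) -> Plan:
    plan.route = " -> ".join(plan.pools)
    plan.expected_slippage = 0.002  # assumed estimate
    return plan

def verification_agent(plan: Plan, observed_slippage: float, tolerance: float = 0.001) -> bool:
    """Return True to proceed, False to kill the trade if the numbers moved."""
    return plan.audited and abs(observed_slippage - plan.expected_slippage) <= tolerance

def run_swarm(request: str, observed_slippage: float) -> str:
    plan = execution_agent(risk_agent(discovery_agent(request)))
    if not verification_agent(plan, observed_slippage):
        return "killed"
    return f"executed via {plan.route}"

print(run_swarm("rebalance", observed_slippage=0.002))
print(run_swarm("rebalance", observed_slippage=0.010))
```

The first call goes through and the second is killed, because the observed slippage moved outside the assumed tolerance.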

The trust fabric and the million-agent problem

Once you have swarms, the next obvious question is how you govern them.

This is where it stops being a productivity story and starts being a governance one. Yasmeen described what she called the million-agent problem. Large enterprises are heading toward 100,000 to 500,000 agents — quickly, a million. Tracking what each one does, evaluating whether it does the right thing, and meeting regulatory requirements at that scale doesn't fit any pattern from traditional software.

Two ideas from her talk reframed how I think about this.

The first: in the old world, evaluation was a gate. If your code passed unit tests, it shipped. In the agentic world, evaluation is a continuous discipline. She drew the analogy to drug discovery — passing lab tests isn't enough; you watch the drug after launch for side effects that didn't show up in trials. Agents are the same. Their behavior in the wild can drift, and you need observability that runs forever.
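One simple way to picture evaluation as a continuous discipline is a rolling pass rate over an agent's most recent outcomes, checked after launch rather than once before it. This is a sketch under my own assumptions: the `DriftMonitor` name, window size, and threshold are hypothetical, not something from the talk.

```python
from collections import deque

class DriftMonitor:
    """Tracks a rolling pass rate over the most recent agent outcomes."""

    def __init__(self, window: int = 100, min_pass_rate: float = 0.95):
        self.outcomes = deque(maxlen=window)  # old outcomes fall off automatically
        self.min_pass_rate = min_pass_rate

    def record(self, passed: bool) -> None:
        self.outcomes.append(passed)

    def pass_rate(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 1.0

    def drifting(self) -> bool:
        return self.pass_rate() < self.min_pass_rate

monitor = DriftMonitor(window=10, min_pass_rate=0.8)
for ok in [True] * 10:       # behavior at launch
    monitor.record(ok)
healthy_at_launch = not monitor.drifting()
for ok in [False] * 4:       # behavior later, in the wild
    monitor.record(ok)
print(healthy_at_launch, monitor.drifting())  # prints "True True"
```

The agent passes its "gate" at launch and still gets flagged later, which is the whole argument for watching it forever.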

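The second: hard limits, in the form of a two-out-of-three rule. Of three capabilities (reading sensitive data, executing code, and acting autonomously), an agent gets at most two.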

An agent that reads your sensitive data, executes code, and acts autonomously could read your financials, write a script, and email it to a competitor. So the system needs a block — a guardian. And the interesting part is that the guardian doesn't have to be a human anymore. It can be another agent.

Deutsche Telekom did exactly this. They built a guardian — an agent swarm that monitors network traffic and proposes real-time changes to 5G networks (say, when a concert is in town). Before any change goes live, the guardian evaluates whether the autonomous action is safe. They cut resolution times for major network events from several hours to under a minute — a 95% improvement, with zero compromise on security.

Trust as a feature, enforced by another agent. That's a pattern I expect to see a lot more of.
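Going back to the two-out-of-three rule: an agent may hold at most two of reading sensitive data, executing code, and acting autonomously. That is easy to express as a policy check. This is a minimal sketch; the class and function names are my own, not from the talk.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AgentCapabilities:
    reads_sensitive_data: bool
    executes_code: bool
    acts_autonomously: bool

def violates_two_of_three(caps: AgentCapabilities) -> bool:
    """True when an agent holds all three capabilities at once."""
    granted = [caps.reads_sensitive_data, caps.executes_code, caps.acts_autonomously]
    return sum(granted) > 2

analyst = AgentCapabilities(reads_sensitive_data=True, executes_code=True, acts_autonomously=False)
unchecked = AgentCapabilities(reads_sensitive_data=True, executes_code=True, acts_autonomously=True)
print(violates_two_of_three(analyst), violates_two_of_three(unchecked))  # prints "False True"
```

A guardian, human or agent, could run a check like this before granting a new permission to any agent in the swarm.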

What Shopify is actually doing

The fireside chat with Farhan from Shopify made all of this concrete.

The first thing that stuck with me was a story he told about playing with an agentic harness on top of Claude — the kind of LLM-plus-tooling setup he could run on his own machine. He asked it to set up a Friday-night dinner reservation for him and his wife, every week, at 7pm. The model didn't have a tool for that. So it built one. It asked for a Twilio API key, wrote a voice server, called him to test it, hit a half-duplex bug, deleted the code, rewrote it as full-duplex, called him again, then changed the voice when he didn't like it.

That sequence reframed the gap between "AI as chatbot" and "AI as user of software" for me. A chatbot answers your question. An agent builds the missing tool to act on it.

Inside Shopify, two systems show what this looks like at scale.

River is their internal agent that reads Slack, GSuite, GitHub, customer support — all the company's systems — and answers questions across them. Two design choices stood out. River only operates in public channels, so anyone can see what other leaders are asking. And when River is wrong, someone corrects it in the public channel, and every future query benefits from the correction.

That's a small change, but it reshapes how knowledge flows. Five people are no longer holding five outdated answers to the same question. Once one person corrects the agent, everyone gets the updated answer next time.

Pulse is the merchant-facing version of the same idea. Instead of waiting for a merchant to ask a question, Pulse runs as a heartbeat on each store. It might quietly notice your photos are low resolution, or that your best-selling product has been out of stock for a week, or that a theme element is slowing down your site and hurting conversion. Then it tells you. The agent comes to you instead of waiting to be summoned.
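A heartbeat like that can be pictured as a function that runs a fixed set of checks against a store snapshot and returns whatever it finds. This is only my sketch of the idea, not how Pulse is built. The field names and thresholds are assumptions.

```python
def heartbeat(store: dict) -> list:
    """Run every check on one store snapshot and return readable findings."""
    findings = []
    if store["photo_width_px"] < 1000:              # assumed threshold
        findings.append("photos are low resolution")
    if store["best_seller_days_out_of_stock"] >= 7:
        findings.append("best seller out of stock for a week or more")
    if store["theme_load_ms"] > 3000:               # assumed threshold
        findings.append("theme is slowing the site")
    return findings

# Hypothetical snapshot of one store.
snapshot = {"photo_width_px": 640, "best_seller_days_out_of_stock": 8, "theme_load_ms": 1200}
print(heartbeat(snapshot))
```

Run on a schedule, the same function turns "ask the agent" into "the agent tells you."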

The data architecture insight underneath both was the part I liked most. Farhan made the case that you don't have to migrate everything to one system before agents can use it. Shopify has BigQuery, MySQL, Yugabyte, and Spanner running in parallel. They put MCP servers (and later, skills) in front of each one. The agent doesn't care that the data is fragmented, because the skills layer makes it look like one fabric.

That's a much more pragmatic path than "rebuild the data layer first, then add agents." It also explains why MCP servers and skills have suddenly become foundational — they're the seam between rigid old systems and agents that need to act across them.
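Here's a rough sketch of that seam: each backend gets a small wrapper with the same call shape, and the agent only ever sees a registry of named skills. This is a plain Python registry, not the MCP protocol itself. The two "databases" are in-memory dicts and every name in it is hypothetical.

```python
from typing import Callable, Dict

# Fake backends standing in for separate, unmigrated data stores.
ORDERS_DB = {"order-1": {"status": "shipped"}}
INVENTORY_DB = {"sku-9": {"on_hand": 0}}

def order_status_skill(order_id: str) -> dict:
    return ORDERS_DB.get(order_id, {})

def inventory_skill(sku: str) -> dict:
    return INVENTORY_DB.get(sku, {})

# The registry is the only surface the agent sees.
SKILLS: Dict[str, Callable[[str], dict]] = {
    "order_status": order_status_skill,
    "inventory": inventory_skill,
}

def call_skill(name: str, arg: str) -> dict:
    """Single entry point for the agent; it never learns which store answered."""
    if name not in SKILLS:
        raise KeyError(f"unknown skill: {name}")
    return SKILLS[name](arg)

print(call_skill("order_status", "order-1"))
print(call_skill("inventory", "sku-9"))
```

Swapping a backend means changing one wrapper, and the agent-facing names stay the same.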

The deeper shift

Stepping back from the specific examples, what kept getting clearer to me was that we're not just adding AI to existing software. We're rebuilding the stack so the primary user of that software isn't a human anymore.

Each layer of the stack is being inverted around the agent. The interface layer (CLIs over UIs). The data layer (multi-modal engines over SQL alone). The compute layer (inference-optimized silicon). The governance layer (continuous evaluation over one-time gates). And on the consumer side, the demand for this is already here. AI is the new front door for commerce, not a future story.

What I find genuinely useful is that this isn't just a model conversation. It's an architecture conversation. The model is the engine. The harness is the browser. The new winners aren't the companies buying the most software licenses — they're the ones whose systems are most legible, most callable, and most trustable to a non-human user.

A few things I'm taking away

- The user we design for is changing — agents are quietly becoming the primary consumer of a lot of software

- Agents don't behave like humans: they loop, reason, and hit APIs ten to twenty times for what used to be a single human click

- That difference compounds into roughly a hundred times more compute per request, which is enough to break old architectures

- SQL alone isn't enough anymore — reasoning engines need vector, graph, relational, and unstructured working in one place

- We're moving from "SaaS" toward "agents as a service" — the interface layer is being demoted, not deleted

- Orchestration is shifting from imperative instructions to intent-driven swarms with a harness around them

- Trust is becoming a continuous discipline instead of a one-time gate, and the easiest guardian for an agent is often another agent

- The two-out-of-three rule is a useful guardrail — read sensitive data, execute code, work autonomously, pick at most two

- CLIs feel sexy again because agents speak code, not buttons — and they will write the missing tool when none exists

- Building infrastructure beats building features when you don't yet know what use cases agents will unlock

- Generative AI referral traffic to retailers grew over 700% year over year, which means being machine-readable is starting to matter as much as being SEO-friendly

The thing that stayed with me most is that last one. We've spent a long time optimizing software for human attention — pretty UIs, intuitive flows, low friction. The most important "consumer" of what we build next might never see a single pixel we render. The brands and platforms that adapt fastest probably aren't the ones with the prettiest designs. They're the ones whose APIs, schemas, and content are most legible to a machine that reasons. That's a strange and slightly humbling thought to sit with.

Sources

- "From algorithms to agents: How Gen Z, Gen Alpha, and AI are rewiring commerce", Ali Furman (PwC), Google Cloud Next '26. Source for the "cart is now the family group chat" framing, Gen Z / Gen Alpha shopping behavior, the 700% YoY generative-AI referral growth, and the term agentic commerce.

- "An inside look at the evolution of agent development", Yasmeen Ahmad (Google) and Farhan Thawar (Shopify), Google Cloud Next '26. Source for the three-layer inversion (reasoning engine, orchestration, trust fabric), the MakeMyTrip / Spanner case, the Strait of Hormuz scenario, the agentic harness analogy, the two-out-of-three rule, the Deutsche Telekom RAN guardian, and Shopify's River + Pulse systems.